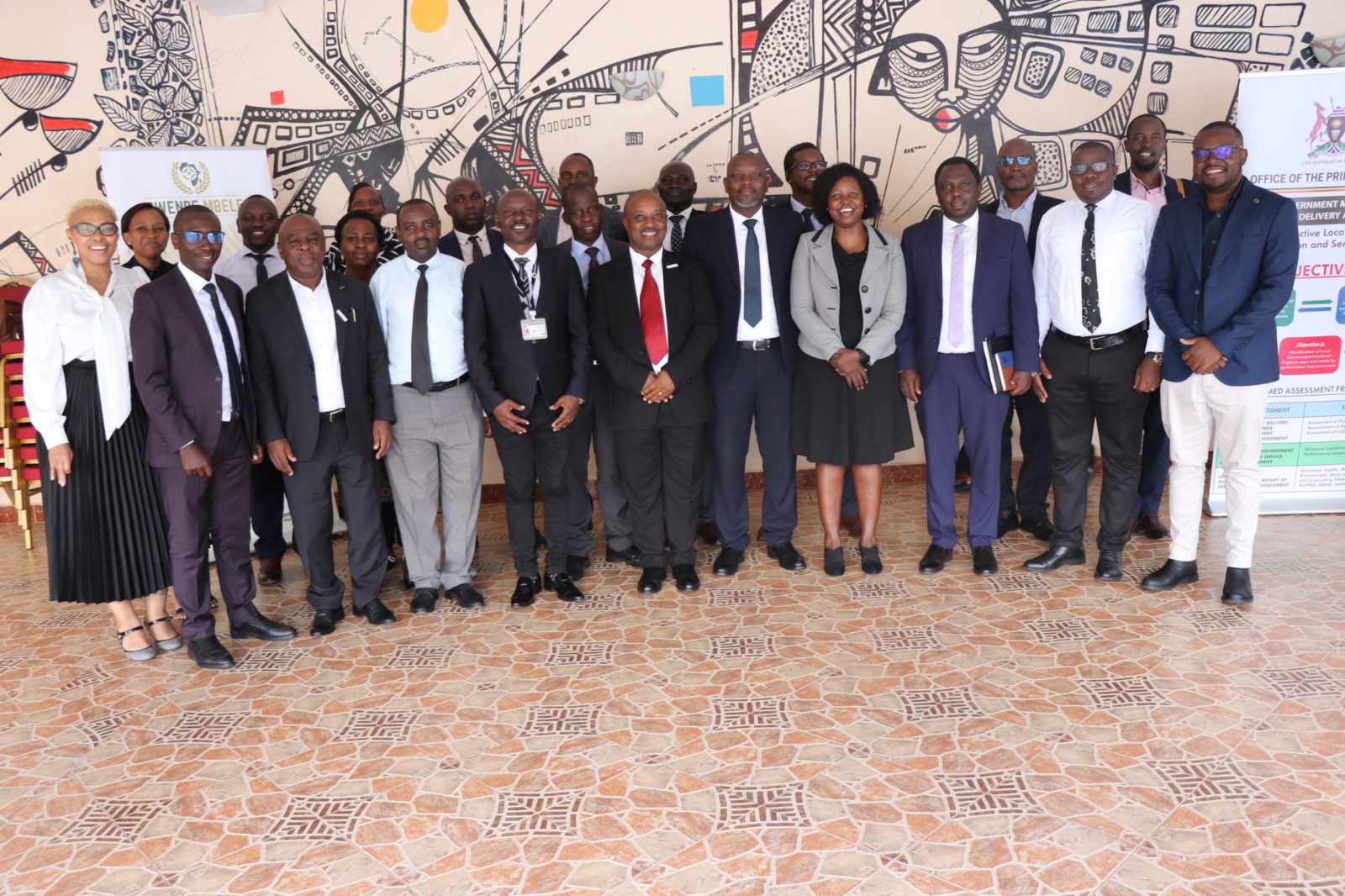

Public Sector National Monitoring & Evaluation Policy Review Workshop in Uganda

Twende Mbele was invited to the Public Sector National Monitoring & Evaluation Policy Review Workshop, held in Kampala, Uganda, from 28 April to 2 May 2025. This workshop was hosted by the Office of...

Rapid Evaluations in Uganda: The view of a Team Leader

Dr James Wokadala

Former Chair, Department of Planning and Applied Statistics, Uganda

Introduction: I was a team leader on two rapid evaluations in Uganda. These evaluations included: assessing...

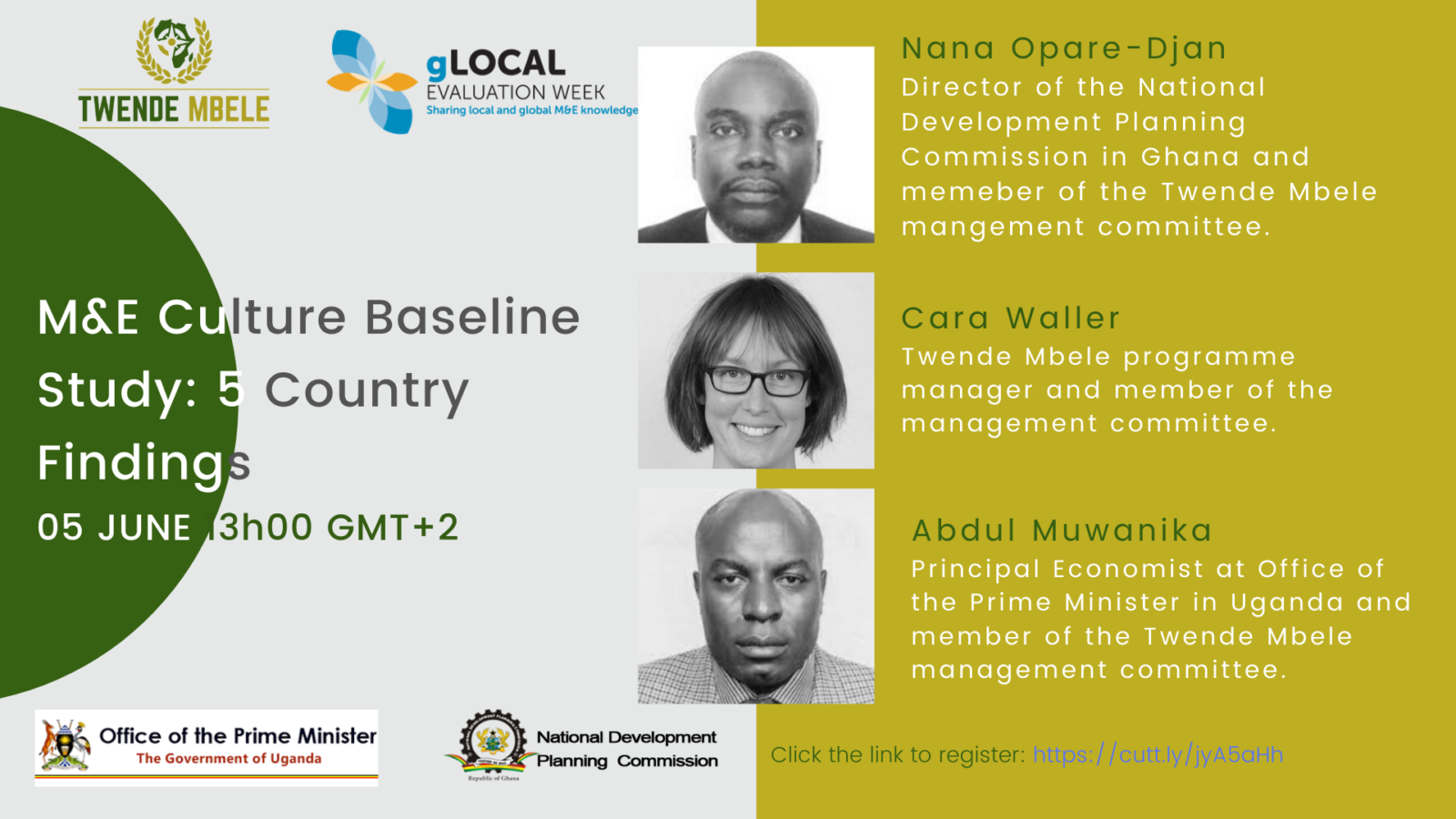

Second annual gLocal Evaluation Week – 2020

With the ten-year countdown to the Sustainable Development Goals underway, systems to evaluate the impact of policies and monitor their progress are more important than ever. To sustain the momentum of global efforts to promote monitoring and evaluation capacity the...

CreativeBox
