25 Years of the Uganda Evaluation Association: Reflections from Uganda Evaluation Week 2026

By Parfait Kasongo

Senior Communications Coordinator, Twende Mbele

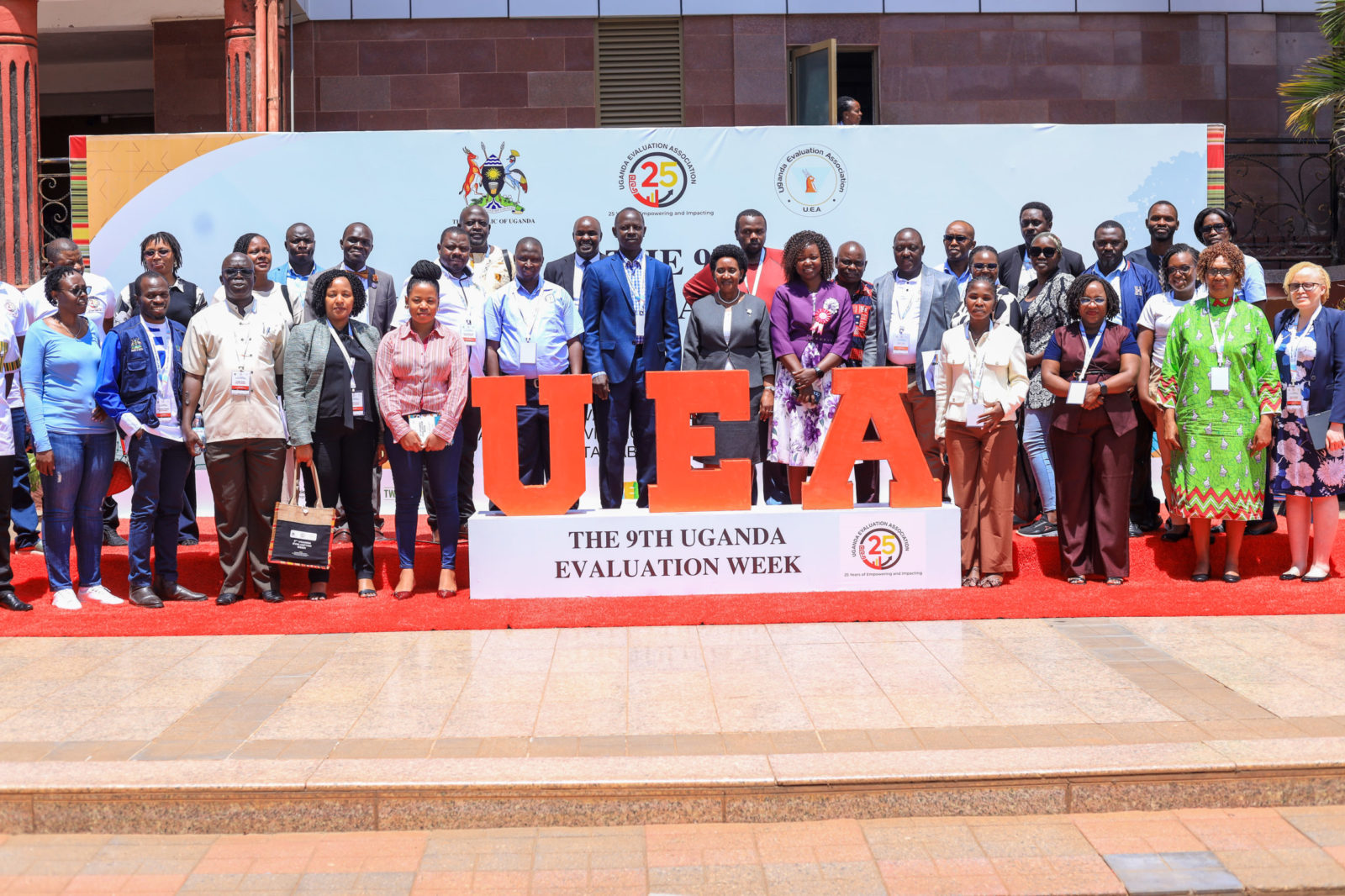

The 9th Uganda Evaluation Week (UEW) took place from 4–8 May 2026 at Silver Springs Hotel in Kampala, bringing together...

Using AI in Evaluation: What’s Changing, What Isn’t, and What Matters

By Amanda Deuchars

Learning Coordinator, Twende Mbele

Setting the Scene: A High-Demand Training in Dakar

A recent training in Dakar, hosted at the Centre Africain d’Études Supérieures en Gestion...

Tanzania Adds Extra Skills to Evaluation Experts Assessing ASDP II Projects

The Tanzanian government has embarked on evidence-based decision-making by equipping monitoring and evaluation specialists with skills to assess the impact of agricultural programmes under the Agricultural Sector Development Programme Phase Two (ASDP II). This is being...

Twende Mbele Communications Feedback Survey

Help us improve how we share information and connect with you. Please take 5–7 minutes to complete this survey. Your feedback is invaluable. Click the link for the...

South Africa’s Cabinet Approves the Revised National Evaluation Policy Framework (NEPF)

By Thokozile Molaiwa

Learning Coordinator, Twende Mbele

Yesterday marked an important milestone for evidence-informed governance in South Africa, with Cabinet approving the revised National...

CreativeBox
