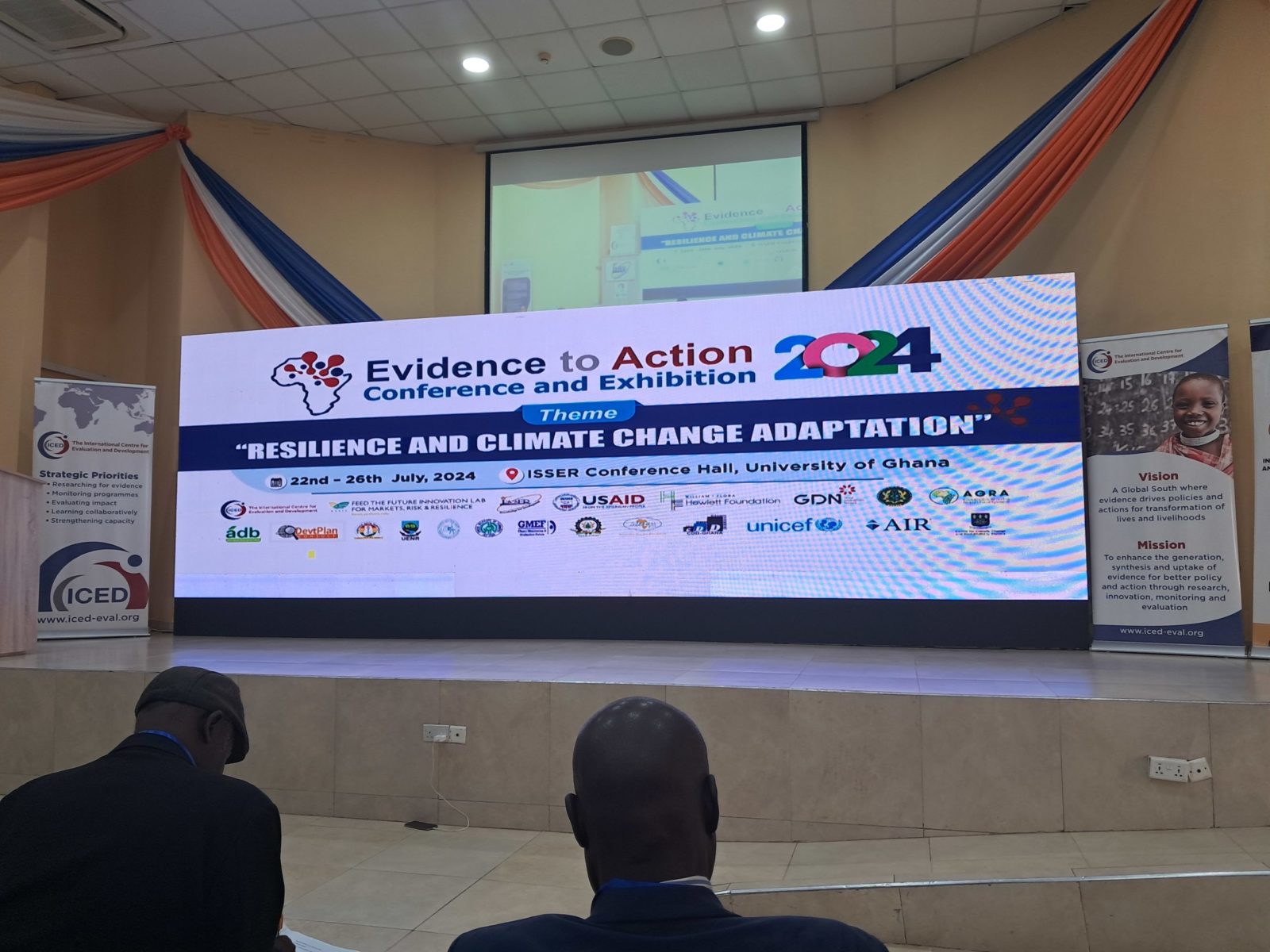

Evidence to Action Conference 2024 – Accra, Ghana

From 22 to 26 July 2024, Twende Mbele attended the 7th Evidence to Action Conference at the ISSER Centre in Accra, Ghana. The conference, whose theme was “Resilience and Climate Change Adaptation”, explored the...

Twende Mbele Capacity Building Workshop – Johannesburg, July 2024

The purpose of the 3-day capacity building workshop was to build and augment existing M&E capacities among public officials of the Twende Mbele member governments: Benin, Ghana, Kenya, Niger, South...

Capacity Building Workshop in Niger – Dosso, July 2024

Niger, like many countries and organisations, attaches great importance to developing the practice of evaluating development actions and public policies. It is in...

Call for Proposal – Consultant Needed for Review of NEPF

A consultant is needed to conduct a review to understand whether the 2019 National Evaluation Policy Framework (NEPF) is relevant and effective, and whether it promotes credible, quality evaluations aligned to the government's goal of assessing the performance of programmes, plans and projects....

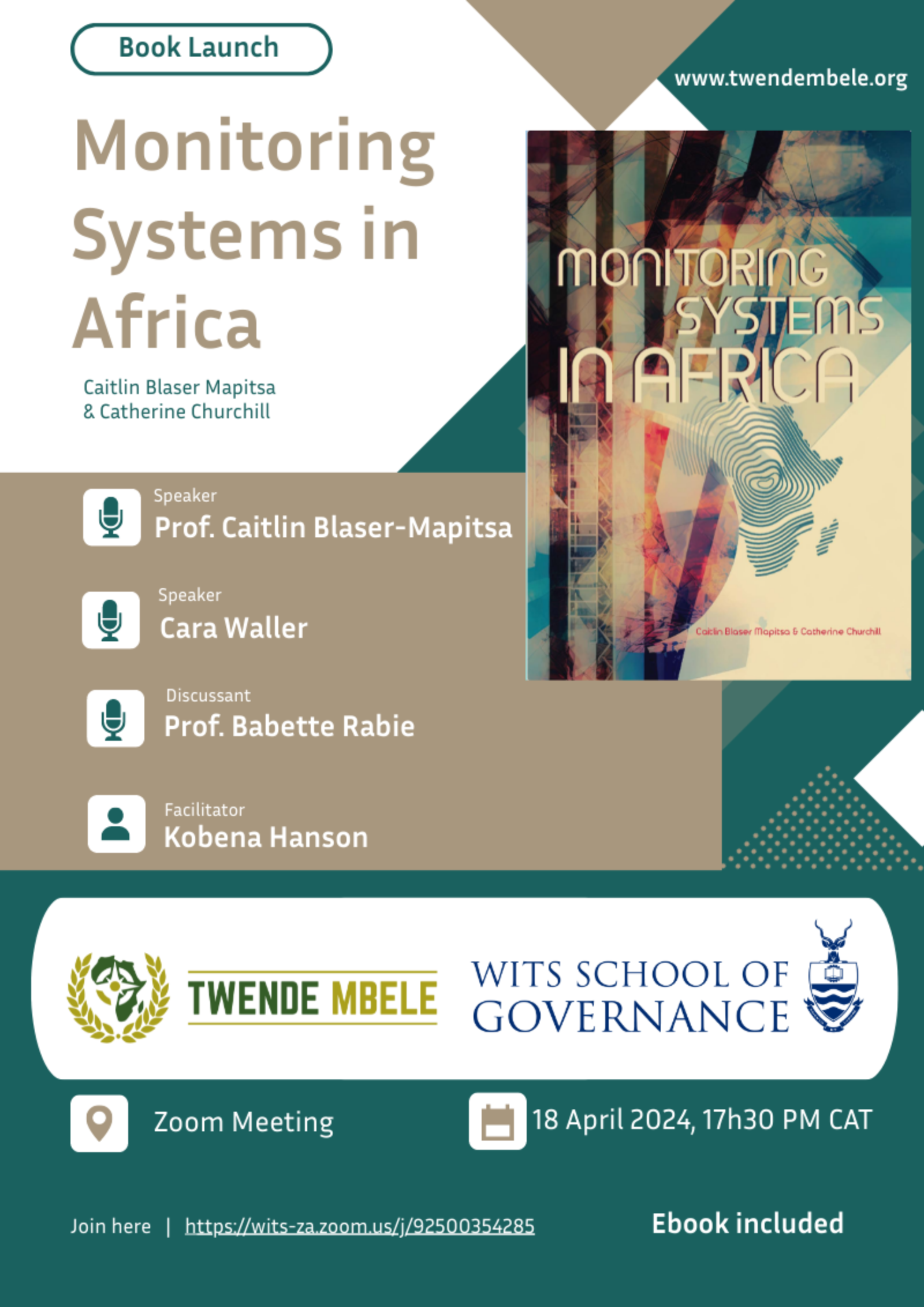

Monitoring Systems in Africa Book Launch

Join us for a virtual launch of the ‘Monitoring Systems in Africa’ book, edited by Caitlin Blaser Mapitsa and Catherine Churchill. This volume presents a holistic approach to monitoring systems. This includes a...
